Articles by tag: Browsers

Verifying Extensions' Compliance with Firefox's Private Browsing Mode

A Privacy-Affecting Change in Firefox 20

Modeling DOM Events

Belay Lessons: Smarter Web Programming

ADsafety

Typechecking Uses of the jQuery Language

Tags: Browsers, JavaScript, Types

Posted on 17 January 2014.Manipulating HTML via JavaScript is often a frustrating task: the APIs for doing so, known as the Document Object Model (or DOM), are implemented to varying degrees of fidelity across different browsers, leading to browser-specific workarounds that contort the code. Worse, the APIs are too low-level: they amount to an “assembly language for trees”, allowing programmers to navigate from a node to its parent, children or adjacent siblings; add, remove, or modify a node at a time; etc. And like assembly programming, these low-level operations are imperative and often error-prone.

Fortunately, developers have created libraries that abstract away from this low-level tedium to provide a powerful, higher-level API. The best-known example of these is jQuery, and its API is so markedly different from the DOM API that it might as well be its own language — a domain-specific language for HTML manipulation.

With great powerlanguage comes great responsibility

Every language has its own idiomatic forms, and therefore has its own characteristic failure modes and error cases. Our group has been studying various (sub-)languages of JavaScript— JS itself, ADsafety, private browsing violations— so the natural question to ask is, what can we do to analyze jQuery as a language?

This post takes a tour of the solution we have constructed to this problem. In it, we will see how a sophisticated technique, a dependent type system, can be specialized for the jQuery language in a way that:

- Retains decidable type-inference,

- Requires little programmer annotation overhead,

- Hides most of the complexity from the programmer, and yet

- Yields non-trivial results.

Engineering such a system is challenging, and is aided by the fact that jQuery’s APIs are well-structured enough that we can extract a workable typed description of them.

What does a jQuery program look like?

Let’s get a feel for those idiomatic pitfalls of jQuery by looking at a few tiny examples. We’ll start with a (seemingly) simple question: what does this one line of code do?

$(".tweet span").next().html()With a little knowledge of the API, jQuery’s code is quite

readable: get all the ".tweet span" nodes, get

their next() siblings, and…get the HTML code of

the first one of them. In general, a jQuery expression consists of

three parts: a initial query to select some nodes, a

sequence of navigational steps that select nearby nodes

related to the currently selected set, and finally

some manipulation to retrieve or set data on those node(s).

This glib explanation hides a crucial assumption: that there exist nodes in the page that match the initial selector in the first place! So jQuery programs’ behavior depends crucially on the structure of the pages in which they run.

Given the example above, the following line of code should behave analogously:

$(".tweet span").next().text()But it doesn’t—it actually returns the concatenated text content of all the selected nodes, rather than just the first. So jQuery APIs have behavior that depends heavily on how many nodes are currently selected.

Finally, we might try to manipulate the nodes by mapping a function

across them via jQuery’s each() method, and here we

have a classic problem that appears in any language: we must ensure

that the mapped function is only applied to the correct sorts of

HTML nodes.

Our approach

The examples above give a quick flavor of what can go wrong:

- The final manipulation might not be appropriate for the type of nodes actually in the query-set.

- The navigational steps might “fall off the edge of the tree”, navigating by too many steps and resulting in a vacuous query-set.

- The initial query might not match any nodes in the document, or match too many nodes.

Our tool of choice for resolving all these issues is a type system for JavaScript. We’ve used such a system before to analyze the security of Firefox browser extensions. The type system is quite sophisticated to handle JavaScript idioms precisely, and while we take advantage of that sophistication, we also completely hide the bulk of it from the casual jQuery programmer.

To adapt our prior system for jQuery, we need two technical insights: we define multiplicities, a new kind that allows us to approximate the size of a query set in its type and ensure that APIs are only applied to correctly-sized sets, and we define local structure, which allows developers to inform the type system about the query-related parts of the page.

Technical details

Multiplicities

Typically, when type system designers are confronted with the need to keep track of the size of a collection, they turn to a dependently typed system, where the type of a collection can depend on, say, a numeric value. So “a list of five booleans” has a distinct type from “a list of six booleans”. This is very precise, allowing some APIs to be expressed with exactitude. It does come at a heavy cost: most dependent type systems lose the ability to infer types, requiring hefty programmer annotations. But is all this precision necessary for jQuery?

Examining jQuery’s APIs reveals that its behavior can be broken down into five cases: methods might require their invocant query-set contain

- Zero elements of any type

T, writen0<T> - One element of some type

T, written1<T> - Zero or one elements of some type

T, written01<T> - One or more elements of some type

T, written1+<T> - Any number (zero or more) elements of some type

T, written0+<T>

These cases effectively abstract the exact size of a collection into a simple, finite approximation. None of jQuery’s APIs actually care if a query set contains five or fifty elements; all the matters is that it contains one-or-more. And therefore our system can avoid the very heavyweight onus of a typical dependent type system, instead using this simpler abstraction without any loss of necessary precision.

Moreover, we can

manipulate these cases using interval arithmetic: for

example, combining one collection of zero-or-one elements with

another collection containing exactly one element yields a

collection of one-or-more elements: 01<T> + 1<T> =

1+<T>. This is just interval addition.

jQuery’s APIs all effectively map some behavior across a queryset.

Consider the next() method: given a collection of at

least one element, it transforms each element into at most one

element (because some element might not have any next sibling). The

result is a collection of zero-or-more elements (if every element

does not have a next sibling).

Symbolically: 1+<01<T>> = 0+<T>. This

is just interval multiplication.

We can use these two operations to describe the types of all of

jQuery’s APIs. For example, the next() method can be

given the type Forall T, 1+<T> ->

0+<@next<T>>.

Local structure

Wait — what’s @next? Recall that we need to

connect the type system to the content of the page. So we ask

programmers to define local structures that describe the

portions of their pages that they intend to query. Think of them as

“schemas for page fragments”: while it is not reasonable

to ask programmers to schematize parts of the page they neither control

nor need to manipulate (such as 3rd-party ads), they

certainly must know the structure of those parts of the page that they

query! A local structure for, say, a Twitter stream might say:

(Tweet : Div classes = {tweet} optional = {starred}

(Author : Span classes = {span})

(Time : Span classes = {time})

(Content : Span classes = {content})

Read this as “A Tweet is a Div

that definitely has class tweet and might have

class starred. It contains three children elements in order:

an Author, a Time, and

a Content. An Author is

a Span with class span…”

(If you are familiar with templating libraries like mustache.js, these local structures might look familiar: they are just the widgets you would define for template expansion.)

From these definitions, we can compute crucial relationships: for

instance, the @next sibling of an Author

is a Time. And that in turn completes our

understanding of the type for next() above: for

any local structure

type T, next() can be called on a

collection of at least one T and will

return collection of zero or more elements that are

the @next siblings of Ts.

Note crucially that while the uses of @next

are baked into our system, the function itself is not

defined once-and-for-all for all pages: it is computed from the

local structures that the programmer defined. In this way,

we’ve parameterized our type system. We imbue it with

knowledge of jQuery’s fixed API, but leave a hole for programmers to

provide their page-specific information, and

thereby specialize our system for their code.

Of course, @next is not the only function we compute

over the local structure. We can

compute @parents, @siblings, @ancestors,

and more. But all of these functions are readily deducible from the

local structure.

Ok, so what does this all do for me?

Continuing with our Tweet example above, our system provides the following output for these next queries:// Mistake made: one too many calls to .next(), so the query set is empty

$(".tweet").children().next().next().next().css("color", "red")

// ==> ERROR: 'css' expects 1+<Element>, got 0<Element>

// Mistake made: one too few calls to .next(), so the query set is too big

$(".tweet #myTweet").children().next().css("color")

// ==>; ERROR: 'css' expects 1<Element>, got 1+<Author+Time>

// Correct query

$(".tweet #myTweet").children().next().next().css("color")

// ==> Typechecks successfullyThe big picture

We have defined a set of types for the jQuery APIs that captures

their intended behavior. These types are expressed using helper

functions such as @next, whose behavior is specific to

each page. We ask programmers merely to define the local structures

of their page, and from that we compute the helper functions we

need. And finally, from that, our system can produce type errors

whenever it encounters the problematic situations we listed above.

No additional programmer effort is needed, and the type errors

produced are typically local and pertinent to fixing buggy code.

Further reading

Obviously we have elided many of the nitty-gritty details that make our system work. We’ve written up our full system, with more formal definitions of the types and worked examples of finding errors in buggy queries and successfully typechecking correct ones. The writeup also explains some surprising subtleties of the type environment, and proposes some API enhancements to jQuery that were suggested by difficulties in engineering and using the types we defined.

Verifying Extensions' Compliance with Firefox's Private Browsing Mode

Tags: Browsers, JavaScript, Programming Languages, Security, Types, Verification

Posted on 19 August 2013.All modern browsers now support a “private browsing mode”, in which the browser ostensibly leaves behind no traces on the user's file system of the user's browsing session. This is quite subtle: browsers have to handle caches, cookies, preferences, bookmarks, deliberately downloaded files, and more. So browser vendors have invested considerable engineering effort to ensure they have implemented it correctly.

Firefox, however, supports extensions, which allow third party code to run with all the privilege of the browser itself. What happens to the security guarantee of private browsing mode, then?

The current approach

Currently, Mozilla curates the collection of extensions, and any extension must pass through a manual code review to flag potentially privacy-violating behaviors. This is a daunting and tedious task. Firefox contains well over 1,400 APIs, of which about twenty are obviously relevant to private-browsing mode, and another forty or so are less obviously relevant. (It depends heavily on exactly what we mean by the privacy guarantee of “no traces left behind”: surely the browser should not leave files in its cache, but should it let users explicitly download and save a file? What about adding or deleting bookmarks?) And, if the APIs or definition of private-browsing policy ever change, this audit must be redone for each of the thousands of extensions.

The asymmetry in this situation should be obvious: Mozilla auditors should not have to reconstruct how each extension works; it should be the extension developers' responsibility to convince the auditor that their code complies with private-browsing guarantees. After all, they wrote the code! Moreover, since auditors are fallible people, too, we should look to (semi-)automated tools to lower their reviewing effort.

Our approach

So what property, ultimately, do we need to confirm about an extension's code to ensure its compliance? Consider the pseudo-code below, which saves the current preferences to disk every few minutes:

var prefsObj = ...

const thePrefsFile = "...";

function autoSavePreferences() {

if (inPivateBrowsingMode()) {

// ...must store data only in memory...

return;

} else {

// ...allowed to save data to disk...

var file = openFile(thePrefsFile);

file.write(prefsObj.tostring());

}

}

window.setTimeout(autoSafePreferences, 3000);The key observation is that this code really defines two programs that happen to share the same source code: one program runs when the browser is in private browsing mode, and the other runs when it isn't. And we simply do not care about one of those programs, because extensions can do whatever they'd like when not in private-browsing mode. So all we have to do is “disentangle” the two programs somehow, and confirm that the private-browsing version does not contain any file I/O.

Technical insight

Our tool of choice for this purpose is a type system for JavaScript. We've used such a system before to analyze the security of the ADsafe sandbox. The type system is quite sophisticated to handle JavaScript idioms precisely, but for our purposes here we need only part of its expressive power. We need three pieces: first, three new types; second, specialized typing rules; and third, an appropriate type environment.

- We define one new primitive type:

Unsafe. We will ascribe this type to all the privacy-relevant APIs. - We use union types to define

Ext, the type of “all private-browsing-safe extensions”, namely: numbers, strings, booleans, objects whose fields areExt, and functions whose argument and return types areExt. Notice thatUnsafe“doesn’t fit” intoExt, so attempting to use an unsafe function, or pass it around in extension code, will result in a type error. - Instead of defining

Boolas a primitive type, we will instead defineTrueandFalseas primitive types, and defineBoolas their union.

- If an expression has some union type, and only one component

of that union actually typechecks, then we optimistically say

that the expression typechecks even with the whole union type.

This might seem very strange at first glance: surely, the

expression

5("true")shouldn't typecheck? But remember, our goal is to prevent privacy violations, and the code above will simply crash---it will never write to disk. Accordingly, we permit this code in our type system. - We add special rules for typechecking if-expressions. When

the condition typechecks at type

True, we only check the then-branch; when the condition typechecks at typeFalse, we only check the else-branch. (Otherwise, we check both branches as normal.)

- We give all the privacy-relevant APIs the

type

Unsafe. - We give the API

inPrivateBrowsingMode()the typeTrue. Remember: we just don't care what happens when it's false!

Put together, what do all these pieces achieve?

Because Unsafe and Ext are disjoint from

each other, we can safely segregate any code into two pieces that

cannot communicate with each other. By carefully initializing the

type environment, we make Unsafe precisely delineate

the APIs that extensions should not use in private browsing mode.

The typing rules for if-expressions plus the type

for inPrivateBrowsingMode() amount to disentangling the

two programs from each other: essentially, it implements dead-code

elimination at type-checking time. Lastly, the rule about union

types makes the system much easier for programmers to use, since they

do not have to spend any effort satisfying the typechecker about

properties other than this privacy guarantee.

In short, if a program passes our typechecker, then it must not call any privacy-violating APIs while in private-browsing mode, and hence is safe. No audit needed!

Wait, what about exceptions to the policy?

Sometimes, extensions have good reasons for writing to disk even

while in private-browsing mode. Perhaps they're updating their

anti-phishing blacklists, or they implement a download-helper that

saves a file the user asked for, or they are a bookmark manager. In

such cases, there simply is no way for the code to typecheck. As in

any type system, we provide a mechanism to break out of the type

system: an unchecked typecast. We currently write such casts

as cheat(T). Such casts must be checked by a human

auditor: they are explicitly marking the places where the extension

is doing something unusual that must be confirmed.

(In our original version, we took our cue from Haskell and wrote

such casts as unsafePerformReview, but sadly that is

tediously long to write.)

But does it work?

Yes.

We manually analyzed a dozen Firefox extensions that had already

passed Mozilla's auditing process. We annotated the extensions with

as few type annotations as possible, with the goal of forcing the

code to pass the typechecker, cheating if necessary.

These annotations found five extensions that violated

the private-browsing policy: they could not be typechecked without

using cheat, and the unavoidable uses

of cheat pointed directly to where the extensions

violated the policy.

Further reading

We've written up our full system, with more formal definitions of the types and worked examples of the annotations needed. The writeup also explains how we create the type environment in more detail, and what work is necessary to adapt this system to changes in the APIs or private-browsing implementation.

A Privacy-Affecting Change in Firefox 20

Posted on 10 April 2013.

Attention, privacy-conscious Firefox users! Firefox has supported private browsing mode (PBM) for a long time, and Mozilla's guidelines for manipulating privacy sensitive data have not changed...but the implementation of PBM has, with potentially surprising consequences. Previously innocent extensions may now be leaking private browsing information. Read on for details.

Global Private-Browsing Mode: Easy!

In earlier versions of Firefox, PBM was an all-or-nothing affair: either all currently open windows were in private mode, or none of them were. This made handling sensitive data easy; the essential logic for privacy-aware extensions is simply:

if (inPBM)

...must store data only in memory...

else

...allowed to save data to disk...(For the technically curious reader, there were some additional steps to take if extension code used shared modules: they additionally had to listen to events signalling exit from PBM, to flush any sensitive data from memory. This was not terribly hard; trying to "do the right thing" would generally work.)

Per-window Private Browsing: Trickier!

However, in Firefox 20, PBM is now a per-window setting. This means that both public and private windows can be open simultaneously. Now, the precautions above are not sufficient. Consider a session-management extension, that periodically saves all open windows and tabs:

window.setInterval(3000, function() {

if (inPBM) return;

var allWindowsAndTabs = enumerateAllWindowsAndTabs();

save(allWindowsAndTabs);

});

Most likely, this code internally uses Firefox's

nsIWindowMediator API to produce the enumeration. The

trouble is, that API does exactly what it claims: it enumerates

all windows—public and private—regardless of the

privacy status of the calling window. In particular, suppose that

both public and private windows were open simultaneously, and the

callback above ran in one of the public windows. Then

inPBM would be false, and so the rest of the

function would continue, enumerate all windows, and save them: a clear

violation of private browsing, even though no code was running in the

private browsing windows!

This code was perfectly safe in earlier versions of Firefox, because the possibility of having private and public windows open simultaneously just could not occur. This example demonstrates the need to carefully audit interactions between seemingly unrelated APIs, features, and modes—ideally, mechanically.

Takeaway Lessons

Private browsing “mode” is no longer as modal as it used to be. Privacy-conscious users need to take a careful look at the extensions they use—especially ones that observe browser-wide changes, like the session-manager example above—and double-check that they appear to behave properly with the new private-browsing changes, or (better yet) have been updated to support PBM explicitly.

Privacy-conscious developers need to take a careful look at their code and ensure that it's robust enough to handle these changed semantics for PBM, particularly in all code paths that occur after a check for PBM has returned false.

Modeling DOM Events

Tags: Browsers, JavaScript, Semantics

Posted on 17 July 2012.In previous posts, we’ve talked about our group’s work on providing an operational semantics for JavaScript, including the newer features of the language. While that work is useful for understanding the language, most JavaScript programs don’t run in a vacuum: they run in a browser, with a rich API to access the contents of the page.

That API, known as the Document Object Model (or DOM), consists of several parts:

- A graph of objects encoding the structure of page (This graph is optimistically called a "tree" since the HTML markup is indeed tree-shaped, but this graph has extra pointers between objects.),

- Methods to manipulate the HTML tree structure,

- A sophisticated event model to allow scripts to react to user interactions.

What makes this event programming so special?

To a first approximation, the execution of every web page looks roughly like: load the markup of the page, load scripts, set up lots of event handlers … and wait. For events. To fire. Accordingly, to understand the control flow of a page, we have to understand what happens when events fire.

Let’s start with this:

<div id="d1">

In outer div

<p id="p1">

In paragraph in div.

<span id="s1" style="background:white;">

In span in paragraph in div.

</span>

</p>

</div>

<script>

document.getElementById("s1").addEventListener("click",

function() { this.style.color = "red"; });

</script>

If you click on the text "In span in paragraph in div"

the event listener that gets added to element span#s1 is

triggered by the click, and turns the text red. But consider the

slightly more complicated example:

<div id="d2">

In outer div

<p id="p2">

In paragraph in div.

<span id="s2" style="background:white;">

In span in paragraph in div.

</span>

</p>

</div>

<script>

document.getElementById("d2").addEventListener("click",

function() { this.style.color = "red"; });

document.getElementById("s2").addEventListener("click",

function() { this.style.color = "blue"; });

</script>

Now, clicking anywhere in the box will turn all the text red. That

makes sense: we just clicked on the <div>

element, so its listener fires. But clicking on the <span> will turn

it blue and still turn the rest red. Why? We didn’t click on

the <div>! Well, not directly…

The key feature of event dispatch, as implemented for the DOM, is that

it takes advantage of the page structure. Clicking on an element of

the page (or typing into a text box, moving the mouse over an

element, etc.) will cause an event to fire "at" that element: the

element is the target of the event, and any event listener

installed for that event on that target node will be called. But in

addition, the event will also trigger event listeners on

the ancestors of the target node: this is called

the dispatch path. So in the example above,

because div#d2 is an ancestor of span#s2,

its event listener is also invoked, turning the text red.

What Could Possibly Go Wrong?

In a word: mutation. The functions called as event listeners are arbitrary JavaScript code, which can do anything they want to the state of the page, including modifying the DOM. So what might happen?

- The event listener might move the current target in the page. What happens to the dispatch path?

- The event listener adds (or removes) other listeners for the event being dispatched. Should newly installed listeners be invoked before or after existing ones? Should those listeners even be called?

- The event listener tries to cancel event dispatch. Can it do so?

- The listener tries to (programmatically) fire another event while the current one is active. Is event dispatch reentrant?

- There are legacy mechanisms to add event "handlers" as well as listeners. How should they interact with listeners?

Modeling Event Dispatch

Continuing our group’s theme of reducing a complicated, real-world system to a simpler operational model, we developed an idealized version of event dispatch in PLT Redex, a domain-specific language embedded in Racket for specifying operational semantics. Because we are focusing on exactly how event dispatch works, our model does not include all of JavaScript, nor does it need to—instead, it includes a miniature statement language containing the handful of DOM APIs that manipulate events. Our model does not include all the thousands of DOM properties and methods, instead including just a simplified tree-structured heap of nodes: this is all the structure we need to faithfully model the dispatch path of an event.

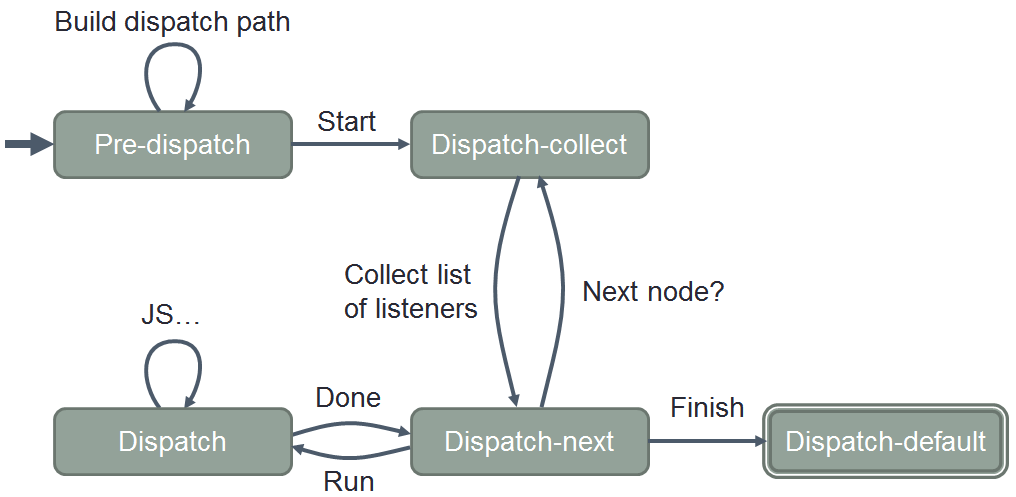

Our model is based on

the DOM Level 3

Events specification. It expresses the key behaviors of event

dispatch, and does so far more compactly than the spec: roughly 1000

lines of commented Redex code replace several pages’ worth of

(at times self-contradictory!) requirements that are spread

throughout a spec over a hundred pages long. From this concise

model, for example, we can easily extract a state machine describing

the key stages of dispatch:

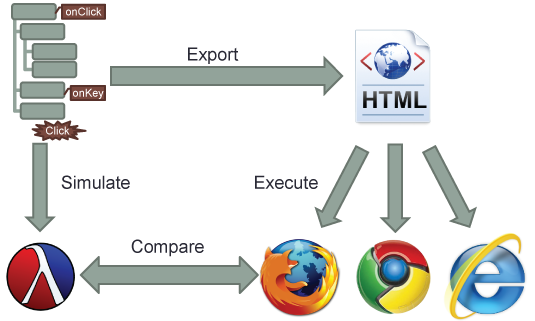

Moreover, the model is executable: Redex allows us to

construct test cases—randomly, systematically, or ad-hoc, as

we choose—and then run them through our model and see what

output it produces. Even better, we can export our tests to HTML

and JavaScript, and run them in real browsers and compare results:

What’s Done

Here’s what we’ve got so far:

- A PLT Redex model of event dispatch,

- An annotated copy of the DOM Level 3 Events spec, showing exactly which lines of our model correspond to which text in the spec, and

- A paper describing the model (and some applications of it) in greater detail.

What’s Next

Since our original JavaScript semantics was also written in Redex, we can combine our model of event dispatch with the JavaScript one, for a much higher-fidelity model of what event listeners can do in a browser setting. Then of course there are further applications, such as building a precise control-flow analysis of web pages and analyzing their code. And other uses? If you’re interested in using our model, let us know!

Belay Lessons: Smarter Web Programming

Posted on 18 December 2011.

This post comes from the keyboard of Matt Carroll, who has worked with us for the past two years. He's the main implementer of desugaring for S5, and spent this semester rebuilding and improving in-house Brown PLT web applications. He writes about his experience here.

The Brown computer science department uses a home-grown web application called Resume to conduct its faculty recruitment process. This semester, Joe and I re-wrote Resume with Belay. Belay is the product of Joe and Arjun's summer research at Google: it's an ongoing inquiry into web development best practices, specifically concerning identity, account management, and security. From my perspective (that of a novice web programmer), getting to grips with the Belay philosophy was a thought-provoking experience, and a great education in the pitfalls that a web developer must (unfortunately) bear in mind.

I Am Not My Cookies

Standard web applications make use of cookies for authentication. When you visit a site and enter your credentials, the site's response sets a session cookie in your browser. Subsequent requests to the site use the information in the cookie to determine 'who you are' and whether 'you' are allowed to do what 'your' request is trying to do. I use quotations in the prior sentence to highlight the fact that HTTP cookies are a poor method of establishing user identity. If another, malicious, web site you visit manages to trick you into sending a request to the original site, that request will contain your cookie, and the good site may treat that request as legitimate and execute it. This is the infamous cross-site request forgery (CSRF) attack.

Belay applications eschew the use of cookies, especially for authentication, and thus they are secure by design against this type of vulnerability. This begs the question: without cookies, how do Belay applications decide whether a request is authenticated? The answer may shock you (as it did me): all requests that reach request handler code are treated as legitimate. At this point, we must examine the server-side of Belay apps in greater detail.

Web Capabilities

Your everyday possibly-CSRF-vulnerable site probably has a URL scheme with

well-known endpoints that lead directly to application functionality. For

example, to post to your blog, you (typically via your browser) send a POST

request to www.blog.com/post with your cookies and the

blog body's text. The server-side handler finds your account in the database using

your cookie, checks that your account can post to that blog, and adds a new

post. If the whole surface of the site's URL space is well-known, a CSRF-ing

attacker can excercise the entirety of a user's view of the site with one

compromised cookie.

In contrast, Belay applications have few well-known URLs, corresponding to the public entry points to the site (the login and contact pages, for instance). Instead, Belay's libraries allow server-side code to dynamically generate random unique URLs and map them to request handler functions. Each of these handlers services a particular type of request for a particular set of data. The randomly generated "capability" urls are embedded in the JavaScript or markup returned to the browser. In a well-designed Belay application, each page has the minimum necessary set of capabilities to carry out its mission, and the capabilities are scoped to the minimum set of data with which they need concern themselves. After you successfully log in to a Belay site, the response will contain the set of capabilities needed by the page, and scoped to only that data which is needed by the page's functionality and associated with your user account. No cookies are necessary to identify you as a user or to authenticate your requests.

A Belay app uses its limited URL scheme as its primary security mechanism, ignoring requests unless they come along trusted capability URLs created by a prior, explicit grant. As long as we can rely on our platform's ability to generate unguessable large random numbers, attackers are out of luck. And, even if a capability URL is leaked from its page, it is scoped to only a small set of data on the server, so the vulnerability is limited. This is a much-improved situation compared to a site using cookie-based authentication---leaking a cookie leaks access to the user's entire view of the site.

Grants and Cap Handlers

Here's a Belay request handler, taken from Resume:

class GetLetterHandler(bcap.CapHandler):

def get(self, reference):

filename = get_letter_filename(reference)

return file_response(filename, 'letter')This handler simply looks up the filename associated with a reference and

returns it (using a few helper functions). Accessing a letter written by an

applicant's reference is quite a sensitive operation---letting the wrong

person access a letter would be a serious security bug. Yet,

GetLetterHandler is a two-liner with no apparent security checks

or guards. How can this be safe?

To answer this, we need to consider how a client can cause

GetLetterHandler to be invoked. The Belay server library will

only invoke this handler via capability URLs created with a grant

to GetLetterHandler. So, we can search the codebase for code

that granted such access. A quick search shows one spot:

class GetApplicantsHandler(bcap.CapHandler):

def get(self, reviewer):

applicants_json = []

for applicant in reviewer.get_applicants():

# ... some processing

refs_json = []

for ref in applicants.get_references():

refs_json.append({

'refName': ref.name,

'getLetter': bcap.grant(GetLetterHandler, ref))

})

# ... add some things to applicants_json

return bcap.bcapResponse(applicants_json) When GetApplicantsHandler is invoked, it will return a

structure that, for each applicant, shows something like:

{

name: 'Theodore Roosevelt',

getLetter:

'https://resume.cs.brown.edu/cap/f7327056-4b91-ad57-e5e4f6c514b6'

} On the server, the string

f7327056-4b91-ad57-e5e4f6c514b6 was created and mapped to

the pair of GetLetterHandler and the Reference

database item for Theodore Roosevelt. A GET request to the URL above will

return the reference letter. Note a nice feature of this setup: the server

doesn't use any information from the client, other than the capability URL, to

decide which reference's letter to return. Thus, a client cannot try

providing different id's or other parameters to explore which letters they

have access to. Only those explicitly granted are accessible.

Poking around in the codebase more, we can see that

GetApplicantsHandler is only granted to reviewers, who can only

create accounts via an email from the administrator. This reasoning is how we

convince ourselves, as developers, that we haven't screwed up and given away

the ability to see a letter to the wrong user. We do all of this without

worrying about a check on accessing the letter, instead relying on the

unguessability of the URLs generated by grant to enforce our

access restrictions.

This may seem like a new-concept overload, and indeed, I had that exact reaction at first. Over time I gained familiarity with the Belay style, and I became more and more convinced by the benefits it offers. Porting Resume became a fairly straightforward process of identifying each server-side request handler, converting it to a Belay handler, and ensuring that whatever pages needed that functionality received grants to call the handler. There were wrinkles, many due to the fact that Resume also uses Flapjax (a language/library for reactive programming in the browser). Flapjax is another Brown PLT product and it is certainly worthy of its own blog post. We had to account for the interaction between Belay's client-side library and Flapjax.

Note that Belay isn't the first place these ideas have surfaced. Belay builds on foundational research: Waterken and PLT Web Server both support cookie-less, capability-based web interactions. The Belay project addresses broader goals in identity management and sharing on the web, but we've leveraged its libraries to build a more robust system for ourselves.

At the end, the benefits of the redesigned Resume are numerous. Cookies are no longer involved. JavaScript code doesn't know or care about unique IDs for picking items out of the database. Random HTTP request probes result in a 404 response and a line in the server's log, instead of a possible data corruption. You can open as many tabs as you like, with each one logged into its own Resume account, and experience no unwanted interference. We were able to realize these improvements while re-using a significant portion of the original Resume code, unchanged.

After my experience with the Resume port, I'm certainly a Belay fan. The project has more to say about topics such as cross-site authorization, sharing, and multi-site identity management, so check out their site and stay tuned for future updates:

Belay ResearchADsafety

Tags: Browsers, JavaScript, Programming Languages, Security, Types, Verification

Posted on 13 September 2011.

A mashup is a webpage that mixes and mashes content from various sources. Facebook apps, Google gadgets, and various websites with embedded maps are obvious examples of mashups. However, there is an even more pervasive use case of mashups on the Web. Any webpage that displays third-party ads is a mashup. It's well known that third-party content can include third-party cookies; your browser can even block these if you're concerned about "tracking cookies". However, third party content can also include third-party JavaScript that can do all sorts of wonderful and malicious things (just some examples).

Is it possible to safely embed untrusted JavaScript on a page? Google Caja, Microsoft Web Sandbox, and ADsafe are language-based Web sandboxes that try to do so. Language-based sandboxing is a programming language technique that restricts untrusted code using static and runtime checks and rewriting potential dangerous calls to safe, trusted functions.

Sandboxing JavaScript, with all its corner cases, is particularly hard. A

single bug can easily break the entire sandboxing system. JavaScript sandboxes

do not clearly state their intended guarantees, nor do they clearly argue why

they are safe.

Verifying Web Sandboxes

A year ago, we embarked on a project to verify ADsafe, Douglas Crockford's Web sandbox. ADsafe is admittedly the simplest of the aforementioned sandboxes. But, we were also after the shrimp bounty that Doug offers for sandbox-breaking bugs:

Write a program [...] that calls the alert function when run on any browser. If the program produces no errors when linted with the ADsafe option, then I will buy you a plate of shrimp. (link)A year later, we've produced a USENIX Security paper on our work, which we presented in San Francisco in August. The paper discusses the many common techniques employed by Web sandboxes and discusses the intricacies of their implementations. (TLDR: JavaScript and the DOM are really hard.) Focusing on ADsafe, it precisely states what ADsafety actually means. The meat of the paper is our approach to verifying ADsafe using types. Our verification leverages our earlier work on semantics and types for JavaScript, and also introduces some new techniques:

- Check out the ★s and ☠s in our object types; we use them to type-check "scripty" features of JavaScript. ☠ marks a field as "banned" and ★ specifies the type of all other fields.

- We also characterize JSLint as a type-checker. The Widget type presented in the paper specifies, in 20 lines, the syntactic restrictions of JSLint's ADsafety checks.

Unlike conventional type systems, ours does not prevent runtime errors. After all, stuck programs are safe because they trivially don't execute any code. If you think type systems only catch "method not found" errors, you should have a look at ours.

We found bugs in both ADsafe and JSLint that manifested as type errors. We reported all of them and they were promptly fixed by Doug Crockford. A big thank you to Doug for his encouragement, for answering our many questions, and for buying us every type of shrimp dish in the house.

Learn more about ADsafety! Check out:

- The paper, code, and proofs;

- Video of Arjun presenting at USENIX Security;

- ADsafe and JSLint.