Conceptual Mutation Testing

Tags: Education, Misconceptions, Testing, Tools, User Studies

Posted on 31 October 2023.

Here’s a summary of the full arc, including later work, of the Examplar project.

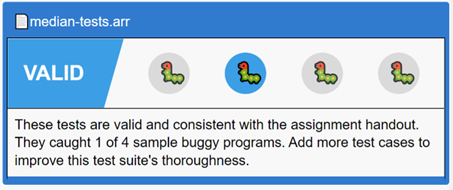

The Examplar system is designed to address a documented phenomenon: students often misunderstand a programming problem statement and hence “solve” the wrong problem. It does so by asking students to begin by writing input/output examples of program behavior, and then evaluating those examples against correct and buggy implementations of the solution. Students refine and demonstrate their understanding of the problem by writing examples that correctly classify these implementations.
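To make the classification idea concrete, here is a minimal sketch in Python. It is not Examplar's actual code: the median problem, the two buggy implementations, and the helper names (`passes`, `is_valid`, `caught`) are all assumptions chosen for illustration. An example suite is treated as valid if every example agrees with the correct implementation, and it catches a buggy implementation if at least one example disagrees with it.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[List[int], float]      # (input, expected output)
Impl = Callable[[List[int]], float]


def correct_median(xs: List[int]) -> float:
    """Reference solution for a hypothetical 'median' assignment."""
    s = sorted(xs)
    mid = len(s) // 2
    return float(s[mid]) if len(s) % 2 else (s[mid - 1] + s[mid]) / 2


def buggy_no_sort(xs: List[int]) -> float:
    """Hypothetical misconception: assumes the input is already sorted."""
    mid = len(xs) // 2
    return float(xs[mid]) if len(xs) % 2 else (xs[mid - 1] + xs[mid]) / 2


def buggy_no_average(xs: List[int]) -> float:
    """Hypothetical misconception: never averages the two middle values."""
    s = sorted(xs)
    return float(s[len(s) // 2])


def passes(impl: Impl, example: Example) -> bool:
    """True if the implementation produces the example's expected output."""
    inp, expected = example
    return impl(list(inp)) == expected


def is_valid(examples: List[Example], correct: Impl) -> bool:
    """A suite is valid only if every example agrees with the correct solution."""
    return all(passes(correct, e) for e in examples)


def caught(examples: List[Example], buggy: Dict[str, Impl]) -> List[str]:
    """Names of buggy implementations rejected by at least one example."""
    return [name for name, impl in buggy.items()
            if any(not passes(impl, e) for e in examples)]


BUGGY = {"no_sort": buggy_no_sort, "no_average": buggy_no_average}
EXAMPLES = [([3, 1, 2], 2.0), ([1, 2, 3, 4], 2.5)]

print(is_valid(EXAMPLES, correct_median))  # suite agrees with the reference
print(caught(EXAMPLES, BUGGY))             # which misconceptions it rules out
```

In this sketch the first example alone only rules out `no_sort`; the even-length example is needed to rule out `no_average`. An example such as `([1, 2, 3, 4], 3.0)` would make the suite invalid, since the correct implementation rejects it.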

It is, however, very difficult to come up with good buggy candidates. These programs must correspond to the problem misconceptions students are most likely to have. Otherwise, students can end up spending too much time trying to catch them, and not enough time on the actual programming task. Additionally, a small number of very effective buggy implementations is far more useful than a large number of them, a set of ineffective ones, or (worst of all) a large number of ineffective ones.

Our previous research has shown that student-authored examples that fail the correct implementation often correspond to student misconceptions. Buggy implementations based on these misconceptions circumvent many of the pitfalls of expert-generated equivalents (most notably the ‘expert blind spot’). That work, however, leaves unanswered the crucial question of how to operationalize class-sourced misconceptions. Even a modestly sized class can generate thousands of failing examples per assignment. It takes a huge amount of manual effort, expertise, and time to extract misconceptions from this sea of failing examples.

The key is to cluster these failing examples. The obvious clustering method – syntactic – fails miserably: small syntactic differences can result in large semantic differences, and vice versa (as the paper shows). Instead, we need a clustering technique that is based on the semantics of the problem.

This paper presents a conceptual clustering technique based on key characteristics of each programming assignment. These clusters dramatically shrink the space that must be examined by course staff, and naturally suggest techniques for choosing buggy implementation suites. We demonstrate that these curated buggy implementations better reflect student misunderstandings than those generated purely by course staff. Finally, the paper suggests further avenues for operationalizing student misconceptions, including the generation of targeted hints.
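As a rough illustration of clustering by problem characteristics rather than by syntax, consider the sketch below. It is not the paper's algorithm: the median assignment, the three named characteristics, and the `signature` and `cluster` helpers are assumptions made up for this example. Each failing example is labeled with the set of characteristics it is consistent with, and examples with the same label set land in the same cluster.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, FrozenSet, List, Tuple

Example = Tuple[List[int], float]  # (input, output the student claimed)


def unsorted_middle(xs: List[int]) -> float:
    """Middle of the list as given, without sorting first."""
    mid = len(xs) // 2
    return float(xs[mid]) if len(xs) % 2 else (xs[mid - 1] + xs[mid]) / 2


# Hypothetical characteristics for a 'median' assignment. Each predicate asks:
# is the claimed output what this particular misunderstanding would produce?
CHARACTERISTICS = {
    "treats input as already sorted":
        lambda xs, out: out == unsorted_middle(xs),
    "confuses median with mean":
        lambda xs, out: out == mean(xs),
    "skips averaging on even length":
        lambda xs, out: len(xs) % 2 == 0
        and out == float(sorted(xs)[len(xs) // 2]),
}


def signature(example: Example) -> FrozenSet[str]:
    """The set of characteristics that the failing example is consistent with."""
    xs, out = example
    return frozenset(name for name, holds in CHARACTERISTICS.items()
                     if holds(xs, out))


def cluster(failing: List[Example]) -> Dict[FrozenSet[str], List[Example]]:
    """Group failing examples that share the same signature."""
    groups = defaultdict(list)
    for ex in failing:
        groups[signature(ex)].append(ex)
    return dict(groups)


# Examples that the correct median implementation would reject.
FAILING = [
    ([3, 1, 2], 1.0),
    ([9, 7, 8], 7.0),
    ([1, 2, 6], 3.0),
    ([1, 2, 3, 4], 3.0),
    ([5, 5, 1], 9.0),
]

for sig, members in cluster(FAILING).items():
    print(sorted(sig) or ["unexplained"], members)
```

The first two failing examples share no literal values, yet both fall into the "already sorted" cluster, which is the kind of grouping a purely syntactic comparison would miss. Examples with an empty signature stay in an "unexplained" group for staff to inspect by hand.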

You can learn more about the work from the paper.